Murat Işık, CEO and co-founder of Noah Labs, believes the next major cyberwar will not be fought with chatbots or public consumer tools. It will happen inside secure government networks, defense contractors, banks, and regulated industries that cannot risk sending sensitive information into public cloud systems.

Işık was a founder in StartX, Stanford’s startup accelerator, and he previously served as a Research Scholar and PhD researcher at Stanford University, where he worked under Professors Sadasivan Shankar and Newton Howard on computing and energy systems research connected to the CompJoules project. Işık is also a Fellow at the Foresight Institute and a member of the Turkish American Scientists and Scholars Association. He earned a master’s degree in Electrical and Computer Engineering from Drexel University.

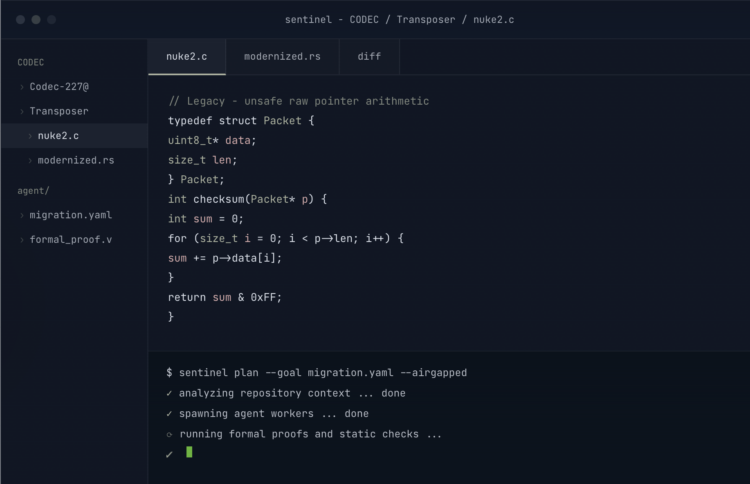

Işık described Noah Labs as “the AI native IDE for government and regulated industry,” comparing the company’s platform to Cursor-style development environments adapted for organizations that require strict control over infrastructure, data residency, and security. The company’s core offering is “on-prem,” or on-premises, access to AI tooling that no one outside the customer organization can reach. That makes it an appealing option for militaries and governments that do not want to paste battle plans into ChatGPT. Its first product, Sentinel, brings AI coding expertise to these secure clients.

“We started on this problem with code modernization and legacy code optimization, because governments, defense, banking, all the regulated industries have a bunch of code bases, legacy code bases. And we were modernizing from COBOL to Java, C to Rust, Python to CUDA. And we saw that they are not able to use the cloud native platforms due to the kind of work culture they had,” he said. “And even when they work with the local LLMs, they are receiving a kind of a super small amount of the accuracy.”

Noah Labs initially focused on translating and optimizing those systems, converting older codebases into modern architectures like Rust, CUDA, and Java. But the company quickly encountered another challenge: many regulated organizations were unable or unwilling to use public cloud AI systems at all.

Unlike most consumer AI tools, Sentinel is designed to run entirely inside customer infrastructure. The company avoids external access to customer systems and emphasizes local deployment inside approved hardware environments.

“We do the on-prem deployment,” Işık said. “We don’t have any access outside the company.”

The hardware requirements can be substantial. Işık noted that some deployments may require access to hundreds of GPUs depending on model size and workload, although many larger organizations already maintain their own infrastructure or rent compute through providers like Lambda Labs, AWS, Azure, and Google Cloud while maintaining domestic data residency requirements.

The company is already working with organizations tied to Lockheed Martin and Stanford Federal Credit Union as early customers and backers.

“Working with the government is the best way to get those government contracts,” he said. “Otherwise, it’s hard to build up the relationship.”

Through a previous company, Işık worked with the U.S. Air Force on foundational AI models tied to agentic systems. He described the Air Force as one of the more technically aggressive organizations within the U.S. government when it comes to AI experimentation and deployment.

Noah Labs’ next major project moves beyond coding assistants entirely. The company is currently building what Işık calls an “Autonomous Software Operator,” or ASO, designed to automate large-scale software engineering tasks through coordinated AI agents.

“I want to build up the OpenClaw version for the regulated industry,” he said. “Ten agents, you give a prompt and get the project completed. No human intervention.”

The project reflects a broader trend inside the AI sector where companies are shifting away from simple chatbot interfaces toward multi-agent systems capable of coordinating workflows, writing code, validating outputs, and operating semi-independently inside enterprise environments.

Işık argued that the larger bottleneck in AI is no longer raw model capability but data quality and infrastructure.

“Today model capability is not the biggest problem,” he said. “The biggest problem is the data handling and data processing for your models and how you are going to train them.”

According to Işık, Noah Labs’ current systems are achieving roughly 60 to 70 percent operational accuracy in some internal testing, with the company targeting significantly higher reliability later this year before wider deployment.

Longer term, Işık wants Noah Labs systems deployed inside active defense environments. He specifically referenced the Navy’s Aegis Combat System and Army Software Factory initiatives as possible integration targets.

“If we can be battlefield validated,” he said, “I think that’s kind of a dream I have at Noah Labs.”